Government Digital Service (GDS)


Help us give government better data about services

Blog posted by: Roxanne Asadi, 31 October 2016 — Measurement and analytics, Services Programme

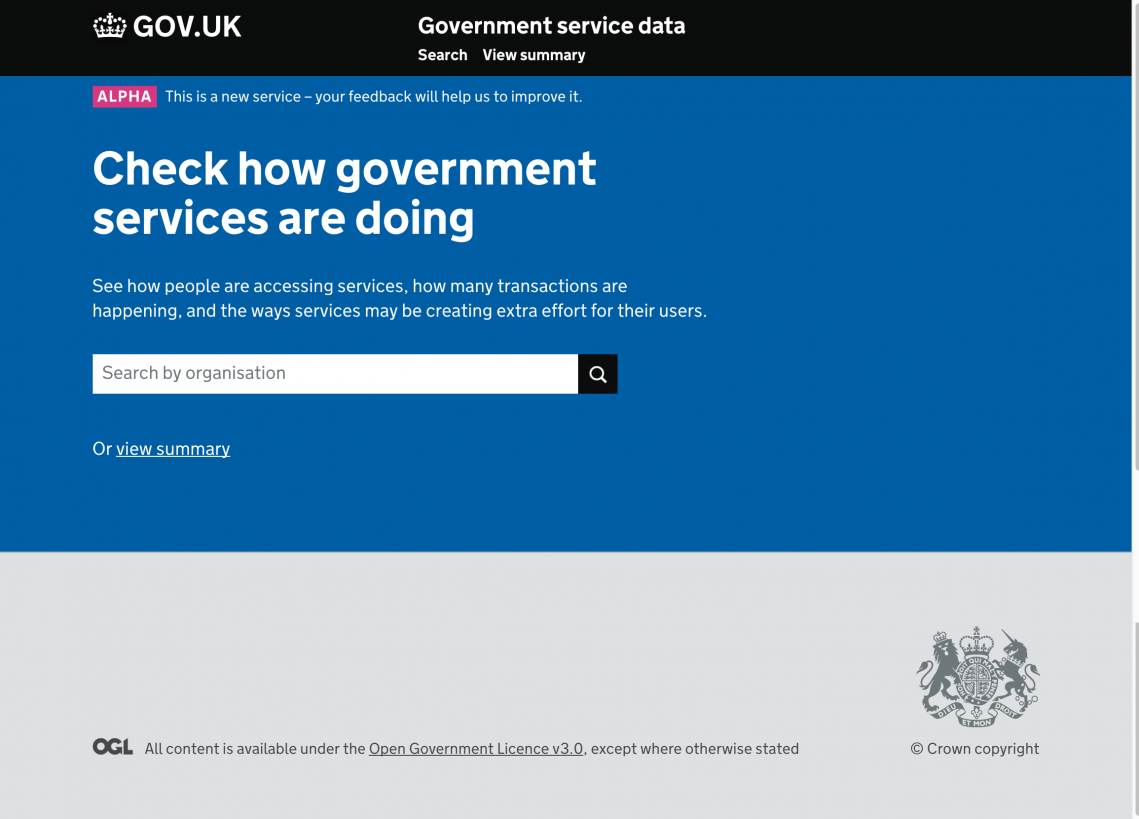

Front page of the prototype

The Performance Platform measures how government services are performing. The team that runs it decided to carry out a rediscovery of its work, and we blogged about it in June. We are exploring what data we should provide, and how we should provide it, to enable better decisions about government services.

We’ve been through a rediscovery and we’re now looking for user research participants and alpha partners to help us develop our prototype and improve the data we’re giving.

Here’s what we’ve done so far and what we plan to do in alpha.

Running the rediscovery

One of the roles of GDS is to help the rest of government to make great services. We wanted to know what part we could play in this.

We wanted to know what problems people were trying to solve with data, whether we were still meeting users’ needs and, if not, what we should do about it.

So we ran a rediscovery. We ran this in parallel with our work to maintain the Performance Platform. We haven’t changed anything or switched anything off. The work we’re doing is to outline our future direction.

You can read more about our user research, and more about some of the outputs.

What we learned

When we were looking at user needs, we focused on people in strategic roles. These might be people who are responsible for a number of services, or people who have a strategic decision-making role in departments.

We found some high-level needs for these users. They need to:

- know what’s happening with services across government so that they can prioritise where to intervene

- be able to share best practice so that they can help government better meet user needs

- be able to demonstrate the benefits of their strategies so that they can show their value

Our research also confirmed that the data that we give to people needs to be reliable and standardised. And people need to be able to understand the data we provide so that they can use it properly.

Metrics

Through our initial research, we identified 3 main useful metrics: the breakdown of transactions per channel, the number of unique users for a service, and the reason a user contacts a service or has to repeat or amend something.
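As a rough illustration of the first of these metrics, the sketch below works out each channel's share of a service's transactions. The channel names, the sample figures and the `channel_breakdown` function are hypothetical; they are not taken from the Performance Platform.

```python
from collections import Counter


def channel_breakdown(transactions):
    """Return each channel's share of transactions as a percentage.

    `transactions` is an iterable of channel names, one per transaction.
    (Hypothetical input shape, chosen only for this illustration.)
    """
    counts = Counter(transactions)
    total = sum(counts.values())
    if total == 0:
        # No transactions recorded, so there is nothing to break down.
        return {}
    return {channel: round(100 * n / total, 1) for channel, n in counts.items()}


# Made-up data: one entry per completed transaction.
sample = ["online"] * 6 + ["phone"] * 3 + ["paper"] * 1
print(channel_breakdown(sample))
```

A real service would also need an agreed definition of each channel before figures like these could be compared across government.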

At the moment the Performance Platform uses ‘user satisfaction’ to indicate service quality. This is measured by surveying users as they complete transactions. There are several issues with this. Very few people respond to the survey, and it only collects responses from successful online transactions. It can also be hard to know if someone is happy with the service itself, or just happy about their personal outcome from it.

We’re looking at the reasons why users contact a service as a possible alternative. We tried to categorise different types of user contact, and to distinguish the kind of contact services expect from a user (for example, to get support to use a service) from contact prompted by a problem with the service. We found that making these groupings was very complex and subjective.

Instead we found that we could group reasons for phone calls and reasons for people having to repeat and amend things without assigning value judgements to them. This means users of the data can make their own judgement calls on the success of a service.
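One way to picture this kind of neutral grouping is a simple tally of contacts by type and reason, with no label saying whether a reason is good or bad. This is only a sketch: the record format, the reason names and the `group_contact_reasons` function are assumptions made for illustration.

```python
from collections import defaultdict


def group_contact_reasons(records):
    """Count contacts per (contact type, reason) pair.

    `records` is an iterable of (contact_type, reason) tuples. No value
    judgement is attached to any reason; interpretation is left to
    whoever reads the counts.
    """
    counts = defaultdict(int)
    for contact_type, reason in records:
        counts[(contact_type, reason)] += 1
    return dict(counts)


# Made-up records for illustration only.
records = [
    ("phone call", "check progress"),
    ("phone call", "check progress"),
    ("phone call", "help completing form"),
    ("repeat or amend", "change of address"),
]
for (contact_type, reason), n in sorted(group_contact_reasons(records).items()):
    print(f"{contact_type}: {reason} = {n}")
```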

Prototyping. And prototyping again

People weren’t really using the graphs of the data we provided. This is because they liked to take the data and combine it with other information to create a complete picture.

So our first prototype focused on data without much visualisation. We tested a prototype of a spreadsheet to find out if the metrics and other information we had developed were the right ones. This helped us to eliminate some metrics. It also showed us that users who initially didn’t use graphs did need some visualisation. A spreadsheet wasn’t enough.

For our second prototype, we focused on how we should group the data, so that it was most useful for our users. Our research found that users are interested in how ‘transactions’ are grouped into end-to-end services.

So as well as the usual department and agency groupings, we also tried out ‘themes and topics’ groupings around things like driving and pensions. We found that while people were interested in these groupings, we need to refine them further, so that they are as useful as possible.

Looking for alpha partners

The research results have helped us prioritise user contact across channels for our minimum viable product. We’re building a prototype so that we can test this.

We’re looking for partners for the alpha prototyping. These partners will help us to shape the way we collect and report on government service data. Initially, we want to partner with one service, helping them to provide the data and build our knowledge to support our future technical decisions.

If you’re interested in being an alpha partner for this, or in taking part in our user research sessions, get in touch by emailing performance-platform@digital.cabinet-office.gov.uk

